SWIR Use In Autonomous Cars

Autonomous cars will likely become the new standard soon, but their capabilities and efficiency are still limited. Recent news has reported several incidents of autonomous vehicles stopping suddenly in the middle of the road or crashing into other vehicles. A major reason is that today's cars rely on LiDAR and time-of-flight (ToF) sensing, which cannot reliably differentiate between objects or adapt to changing weather conditions.

What is SWIR?

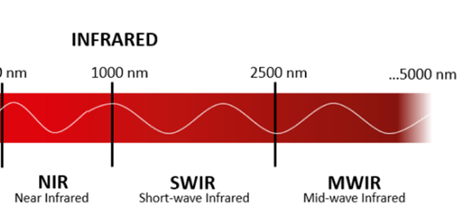

SWIR, short for short-wave infrared, is generally defined as the 1000–2500 nm region of the infrared spectrum. Some sources instead refer to the broader 780–3000 nm range as either NIR (near-infrared) or SWIR. Photons in this region carry less energy than visible or ultraviolet (UV) light, but they still interact with objects by being reflected and absorbed. These wavelengths are invisible to the naked eye, yet they can still be used to capture images beyond the visible range.
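Photon energy follows E = hc/λ. In convenient units, that is about 1239.84 eV·nm divided by the wavelength in nanometers. The short sketch below evaluates this relation at a few representative wavelengths to make the energy comparison concrete. The wavelengths chosen are illustrative and are not taken from any sensor specification.

```python
# Planck constant times the speed of light, expressed in eV*nm
HC_EV_NM = 1239.84198


def photon_energy_ev(wavelength_nm: float) -> float:
    """Return the energy of a single photon, in electronvolts (E = h*c / lambda)."""
    if wavelength_nm <= 0:
        raise ValueError("wavelength must be positive")
    return HC_EV_NM / wavelength_nm


# Representative wavelengths (illustrative choices only)
bands = {
    "UV (300 nm)": 300.0,
    "visible green (550 nm)": 550.0,
    "SWIR lower edge (1000 nm)": 1000.0,
    "SWIR upper edge (2500 nm)": 2500.0,
}

if __name__ == "__main__":
    for name, wl in bands.items():
        print(f"{name}: {photon_energy_ev(wl):.2f} eV")
```

Across the SWIR band, photon energies come out at roughly 0.5 to 1.2 eV. Green light, by comparison, sits at about 2.3 eV.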

How can we detect SWIR?

Although infrared light is invisible, it can be detected in several ways. Researchers have developed three main detector technologies for this purpose:

- InGaAs (indium gallium arsenide) photodetectors
- QDIPs (quantum dot infrared photodetectors)
- CMOS (complementary metal-oxide-semiconductor) sensors

InGaAs

InGaAs is the most widely used material for NIR and SWIR photodetectors. An InGaAs photodiode uses a p-n junction whose bandgap is smaller than silicon's, which lets it absorb longer wavelengths. The drawback is that a smaller bandgap also produces more thermally generated dark current, so the sensor is noisier unless it is cooled. InGaAs sensors can be made highly sensitive, but they are expensive to produce and limited in pixel size and pixel count.
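The cutoff relation λc = hc/Eg links a material's bandgap to the longest wavelength it can detect. The sketch below applies it to two materials. The bandgap values are approximate room-temperature textbook figures for silicon and for In0.53Ga0.47As, the composition commonly grown lattice-matched on InP. Treat them as illustrative assumptions rather than datasheet values.

```python
# Planck constant times the speed of light, expressed in eV*nm
HC_EV_NM = 1239.84198


def cutoff_wavelength_nm(bandgap_ev: float) -> float:
    """Longest wavelength a semiconductor can absorb through band-to-band
    transitions: lambda_c = h*c / E_g. Photons with longer wavelengths
    carry less energy than the bandgap and pass through undetected."""
    if bandgap_ev <= 0:
        raise ValueError("bandgap must be positive")
    return HC_EV_NM / bandgap_ev


# Approximate room-temperature bandgaps (assumed textbook values, in eV)
materials = {
    "Si": 1.12,
    "In0.53Ga0.47As": 0.75,
}

if __name__ == "__main__":
    for name, eg in materials.items():
        print(f"{name}: Eg = {eg} eV -> cutoff ~ {cutoff_wavelength_nm(eg):.0f} nm")
```

Silicon's cutoff lands near 1.1 µm, just past the start of the SWIR band. InGaAs reaches roughly 1.65 µm, which is why it can image in SWIR where plain silicon cannot.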

QDIP

Quantum dots are a newer technology for NIR and SWIR applications. A QDIP places quantum dots between a silicon surface and an encapsulant, creating an active region with three-dimensional quantum confinement that absorbs longer wavelengths. These sensors are low cost and show little temperature dependence. However, they are unstable and suffer from low sensitivity and poor uniformity.
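The idealized particle-in-a-cubic-box model can illustrate how three-dimensional confinement ties absorption to dot size. In that model, every energy level scales as 1/L² with the dot size L. The sketch computes the spacing between the ground state and the first excited state, then the photon wavelength that matches it. It is only a qualitative sketch: real quantum dots have finite barriers and more complex band structures. The effective-mass ratio of 0.05 is a hypothetical placeholder, not a property of any particular QDIP.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J*s
M_E = 9.1093837015e-31   # electron rest mass, kg
EV = 1.602176634e-19     # joules per electronvolt
HC_EV_NM = 1239.84198    # h*c in eV*nm


def box_level_ev(side_nm: float, eff_mass_ratio: float, n=(1, 1, 1)) -> float:
    """Energy of level (nx, ny, nz) in an idealized cubic box with infinite
    walls: E = hbar^2 * pi^2 * (nx^2 + ny^2 + nz^2) / (2 * m * L^2)."""
    if side_nm <= 0 or eff_mass_ratio <= 0:
        raise ValueError("size and effective mass must be positive")
    length_m = side_nm * 1e-9
    mass_kg = eff_mass_ratio * M_E
    quantum_sum = sum(k * k for k in n)
    return HBAR**2 * math.pi**2 * quantum_sum / (2 * mass_kg * length_m**2) / EV


def transition_wavelength_nm(side_nm: float, eff_mass_ratio: float) -> float:
    """Photon wavelength matching the (1,1,1) -> (2,1,1) level spacing."""
    ground = box_level_ev(side_nm, eff_mass_ratio, (1, 1, 1))
    excited = box_level_ev(side_nm, eff_mass_ratio, (2, 1, 1))
    return HC_EV_NM / (excited - ground)


# Hypothetical effective-mass ratio, for illustration only
EFF_MASS = 0.05

if __name__ == "__main__":
    for size in (3.0, 5.0, 8.0):
        wl = transition_wavelength_nm(size, EFF_MASS)
        print(f"L = {size} nm -> transition near {wl:.0f} nm")
```

Larger dots give smaller level spacings and therefore longer absorption wavelengths. Choosing the dot size is how a detector like this can be tuned toward the infrared.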

QPD CMOS

SeeDevice's Quantum Photodetector (QPD) CMOS sensors can see into the NIR and SWIR regions. They amplify pixel signals using two quantum mechanisms: tunneling and plasmonics. This allows the sensor to produce higher-quality, lower-noise images at higher speed than other sensors. It is also more cost-effective and energy-efficient, and it integrates easily into imaging systems.

How can CMOS be applied to LiDAR?

Today's autonomous vehicles use LiDAR to scan their surroundings 25 times per second and identify nearby objects. ToF measurements give the distance to those objects, so the car knows when to brake. Together, these technologies allow cars to move safely and avoid collisions.

Although these technologies are already deployed in cars, their efficiency is limited. Current imaging sensors struggle to detect objects and to maintain visibility in poor weather. Integrating our CMOS technology into these cameras will greatly improve visibility, allowing them to see through fog and rain and to detect objects accurately where today's LiDAR systems cannot. We expect future autonomous vehicles to adopt this technology in their cameras, reducing both error rates and cost.
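The ranging and braking logic described in the previous section can be sketched in a few lines. ToF ranging converts a light pulse's round-trip time t into a distance d = ct/2. A simple braking check then compares that distance with the car's stopping distance. Several parts of this sketch are hypothetical simplifications, not how any production vehicle decides to brake:

- the `must_brake` decision rule itself
- the assumed 7 m/s² deceleration
- treating one 25 Hz scan interval as the reaction delay

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s


def tof_distance_m(round_trip_s: float) -> float:
    """Distance to a target from a light pulse's round-trip time.
    The pulse travels out and back, so the path length is halved."""
    return SPEED_OF_LIGHT * round_trip_s / 2.0


def stopping_distance_m(speed_mps: float, reaction_s: float, decel_mps2: float) -> float:
    """Distance covered during the reaction delay plus braking distance v^2 / (2a)."""
    return speed_mps * reaction_s + speed_mps**2 / (2.0 * decel_mps2)


def must_brake(round_trip_s: float, speed_mps: float,
               scan_rate_hz: float = 25.0, decel_mps2: float = 7.0) -> bool:
    """Hypothetical rule: brake if the obstacle is within stopping distance.
    One scan interval (1 / scan_rate_hz) is treated as the reaction delay,
    and decel_mps2 is an assumed constant braking deceleration."""
    reaction_s = 1.0 / scan_rate_hz
    return tof_distance_m(round_trip_s) <= stopping_distance_m(speed_mps, reaction_s, decel_mps2)


if __name__ == "__main__":
    rt = 200e-9  # 200 ns round trip
    print(f"distance: {tof_distance_m(rt):.2f} m")
    print("brake at 25 m/s:", must_brake(rt, 25.0))
```

For example, a 200 ns round trip corresponds to roughly 30 m. At 25 m/s (90 km/h), the sketch's stopping distance is about 46 m, so `must_brake` returns `True`.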